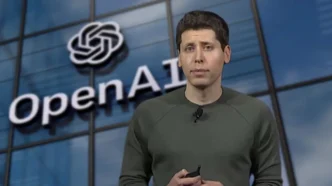

As generative AI opens new doors for innovation, it’s also equipping hackers with sharper tools. From deepfake CEO calls to fabricated invoices, social engineering attacks have entered a more dangerous era — and OpenAI is stepping up to fight back.

In its first-ever cybersecurity investment, OpenAI’s Startup Fund has co-led a $43 million Series A round for Adaptive Security, a New York-based startup that simulates realistic, AI-generated social engineering attacks. Andreessen Horowitz, a longtime backer of AI-driven ventures, co-led the round.

Founded in 2023, Adaptive Security helps companies combat the surge of AI-powered scams by mimicking attacks such as fake voice calls, texts, and emails. Picture your CTO calling to request a one-time passcode, except the caller is not your CTO but an AI-generated spoof. Adaptive’s platform stages these scenarios as training exercises to expose vulnerabilities and teach employees to spot suspicious activity before it’s too late.

Training Humans to Outsmart AI Scams

What makes Adaptive stand out is its focus on the human side of security, particularly social engineering attacks that trick staff into clicking malicious links or sharing sensitive data. These low-tech yet highly effective attacks have cost companies hundreds of millions of dollars, including the more than $600 million Axie Infinity hack in 2022, which involved a fake job offer.

Adaptive aims to flip the script by using AI to simulate these very scams and then training employees to identify and avoid them. Beyond realistic voice calls, the platform also scores which departments in an organization are most at risk and delivers tailored training to shore up weak points.

CEO and co-founder Brian Long says generative AI has made traditional phishing and scam tactics much harder to detect. “It’s no longer about spotting a spelling error in an email — it’s your boss’s voice calling you directly,” Long told TechCrunch.

A Proven Founder and a Growing Market

Brian Long is no stranger to building successful startups. He previously founded TapCommerce, a mobile ad company acquired by Twitter for over $100 million, and Attentive, a messaging platform that hit a $10 billion valuation in 2021. That track record helped Adaptive gain early traction: the company has signed more than 100 corporate clients in less than two years.

This early success caught OpenAI’s attention. According to Long, positive feedback from existing customers played a big role in bringing OpenAI onto the cap table. For OpenAI, investing in cybersecurity is a natural progression as the generative AI ecosystem matures and threats evolve alongside it.

Fighting AI with AI: A Growing Trend

Adaptive joins a wave of startups tackling the dark side of AI. Cyberhaven recently raised $100 million at a $1 billion valuation to help prevent staff from leaking sensitive data into tools like ChatGPT. Snyk, a developer security platform, has credited the risks of AI-generated code with helping push its annual recurring revenue past $300 million. Meanwhile, GetReal, which detects deepfakes, landed $17.5 million last month to expand its offering.

With this new funding, Adaptive Security plans to expand its engineering team and strengthen its platform to stay ahead in what Long calls an “AI arms race” between defenders and bad actors. His advice for employees concerned about voice spoofing? “Delete your voicemail.”